LHC Restart 2016

The Large Hadron Collider (LHC) and its experiments are back in action, now taking physics data at 13 TeV. The goal is to improve our understanding of fundamental physics, which in the decades to come can ultimately drive innovation and inventions by researchers in other fields. The Higgs boson was the last piece of the puzzle for the Standard Model. In 2016 the ATLAS and CMS collaborations at the LHC will study this boson in depth and possibly pave the way for new discoveries. Over the next three to four months there is a need to verify the measurements of the Higgs properties taken in 2015, as well as to check for hints of possible deviations from the Standard Model. Following a short commissioning period, the LHC operators will now increase the intensity of the beams so that the machine produces a larger number of collisions.

We have asked the run coordinators of the four largest LHC experiments, ALICE, ATLAS, CMS and LHCb, to share with us a few words about the first data taking of 2016. Their broad physics programme will be complemented by the measurements of three smaller experiments (TOTEM, LHCf and MoEDAL) that focus with enhanced sensitivity on specific measurements and may hold the key to new physics.

Read more at: http://phys.org/news/2016-05-cern-large-hadron-collider-protons.html#jCp

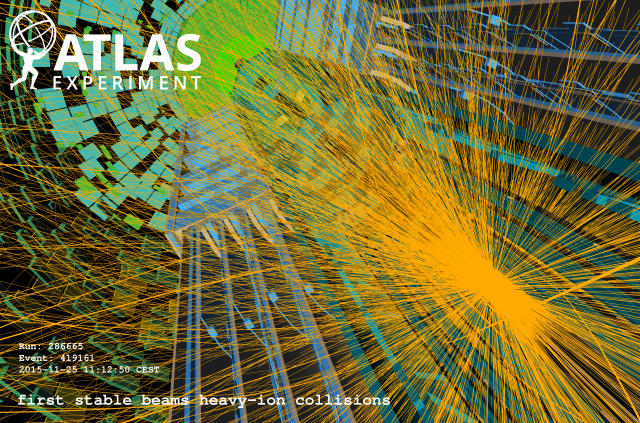

ATLAS

After a successful data taking campaign in 2015, ATLAS is back to observing LHC collisions with a performance that is expected to be as good as - if not better than - that achieved last year. We have taken advantage of the yearly LHC technical stop to consolidate and improve our detector performance, and we are enthusiastically anticipating the luminosity the LHC will deliver in the coming months.

In 2015 data taking was smooth, with more than 87% of the collisions collected being deemed good simultaneously for all of our sub-detectors. In such a large system of course some headaches are always possible: in our case they came from the anomalous current absorption by the Insertable pixel B Layer sensors. These were diligently monitored and investigated by the pixel detector experts and in the end led only to the loss of "pixel good" status for two physics fills over all the data taking periods. Despite these issues, we concluded 2015 with a fraction of live channels per subdetector at the same level or - in many cases - better than what we had in Run I.

During the 2015-2016 shutdown we diligently maintained and improved our detectors. The main problem we faced was substantial damage - observed during access - to one of the bellows at the top of the C-side forward toroid. The superconducting toroids are an integral part of our muon spectrometer. The concern that a leak could develop led us to organize and execute - with great support from the CERN technical teams - a repair campaign. This culminated in the embedding of the deformed bellows inside a larger bellows welded around it. The intervention was carried out accurately and successfully, and our toroids were brought back to full health in time for LHC beams.

Significant upgrades were also deployed in ATLAS: we have a brand new forward proton detector (AFP) installed 220m down the tunnel from ATLAS, as well as several measures taken in order to cope with the more challenging pile-up and bandwidth requirements of 2016 LHC operations at 40 cm beta*. Small age-related issues have also been addressed throughout the ATLAS detector, and noise figures have been improved. The ATLAS Trigger and Data Acquisition system has been in part refurbished, with brand new processing units being deployed, and new exciting triggering capabilities being progressively enabled in the course of 2016.

Now physics production has begun, with the ATLAS subsystems having been re-commissioned with cosmic rays, early LHC collisions and the first "stable beams" data. Preliminary figures show a performance in terms of data taking efficiency and quality consistent with 2015. The even better news is that we see room for further improvement in several directions: while we will inevitably face harsher conditions as the LHC luminosity is being ramped up, everybody at Point 1 is working hard and relentlessly to make our data taking perform even better than last year. This is a challenge that we are all very eager to tackle from as many directions as possible, in order to maintain and even surpass the performance figures achieved in 2015.

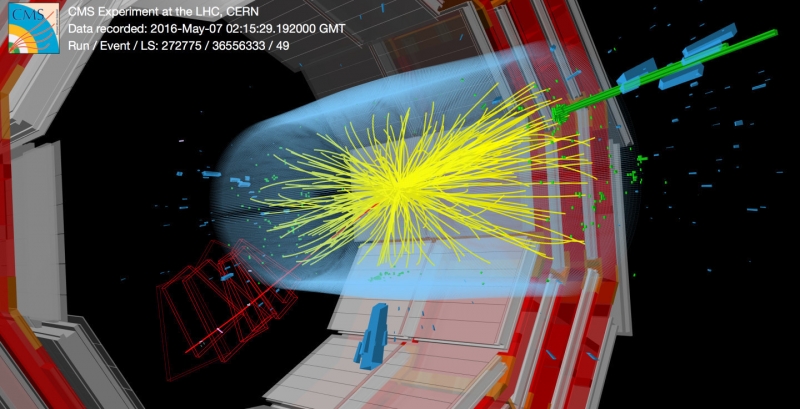

CMS

LHC Run 2 is in full swing, the data are beginning to pour in, and the CMS collaboration is reaping the benefits of hard work!

Run 1 was a phenomenal success at CERN, with all collider experiments making physics discoveries using the highest energy hadron collisions ever created by the LHC. As might be imagined, this was no cakewalk: the physics came along with challenges. As an example, already in the third year of Run 1 the instantaneous luminosity delivered to CMS was at nearly the design value, while the luminosity per bunch crossing exceeded the design by about half. With the prospects of higher luminosity, 25 ns bunch spacing, and high pileup at sqrt(s) = 13 TeV, Run 2 promises to set the bar even higher to extract physics signals from an even more challenging environment.

To take advantage of the increased luminosity in Run 2 and in Run 3, CMS has focused the first phase of its upgrade program to improve the ability of the detector to detect rare physics signals in a high pileup environment. To better “separate the wheat from the chaff,” an additional layer of silicon tracking is being added, the granularity of the hadronic calorimeter is being refined, and the processing power of the Level-1 trigger system is being augmented.

To take advantage of the increased luminosity already in 2016, the production and installation schedule for these upgrades was designed so that the hardware would be installed during the Year-End Technical Stops at the end of both 2015 and 2016. As a result, the 2016 collision run is the first with Phase-1 upgrade hardware in use at CMS, with the Hadronic Calorimeter upgrade partially in place and the Level-1 Trigger upgrade now fully functioning to collect data at Point 5.

The design of the new trigger uses the increased bandwidth of optical fibers to pass high resolution information to large FPGAs. The FPGAs are mounted on electronics cards based on uTCA (micro Telecommunications Computing Architecture), with a small footprint and large processing power. The design enables higher level processing to be applied to each event, for example, to correct for pileup, improve the identification of taus, and better isolate electromagnetic objects.

Preparations to implement this technology began several years ago and a methodical approach was taken to deploy it in a systematic, staged manner. “Stage-1” of the upgrade trigger was deployed in 2015 and the full “Stage-2” of the trigger is now in place for 2016. This has guaranteed a fully operational trigger at all times with definitive improvements at each step.

Our labour is paying off now, with high quality data being collected at high rates. The data are being carefully scrutinized by collaborators, and the prospects are good that we will find whatever new physics Nature is holding for us!

From http://cds.cern.ch/record/2151078...

LHCb

After the winter shutdown, during which the LHCb detector was consolidated but no major intervention took place, the experiment started its data collection campaign at the end of April, when the LHC delivered the first collisions. In less than a week, enough data were collected to perform the initial calibration and alignment of the detector that is always needed after a long stop. Right after these technical activities, the accumulation of data for the physics analyses started. In the middle of May, LHCb also participated in the van der Meer scan runs in order to obtain a precise luminosity calibration for 2016. This special run was also used to collect another type of collision: collisions of the LHC proton beam with a helium target injected directly into the LHCb interaction point. These data will be analyzed to measure the production cross-section of anti-protons in proton-helium collisions. This measurement will be an important input for cosmological models of anti-particle production and could be used, for example, to interpret the observations of the AMS experiment.

During 2016, the LHCb experiment expects to collect about 1.5 fb⁻¹ of data, which will represent a sample at least as large as the one collected during the entire Run 1. This is only partly due to the increase of the beauty production cross-section. Thanks to the improvements made to the trigger software during the winter shutdown, in particular to the execution speed of the selection algorithms, the efficiency for collecting interesting particle decays has increased significantly. In addition, the detector is now fully calibrated and aligned online. This important new feature was developed and already used in 2015, and was ready from the first day of operation in 2016. It is also a crucial ingredient in the increase of trigger efficiencies. For the study of charm decays, the increase of statistics compared to Run 1 will be even larger.

Since the beginning of the year the detector has been operated from an entirely new control room, in a new building at Point 8. Thanks to the joint effort of the SMB, EN and TE CERN departments in collaboration with LHCb, this new facility provides excellent working conditions for the shift crews, composed as before of two people: a shift leader and a data manager.

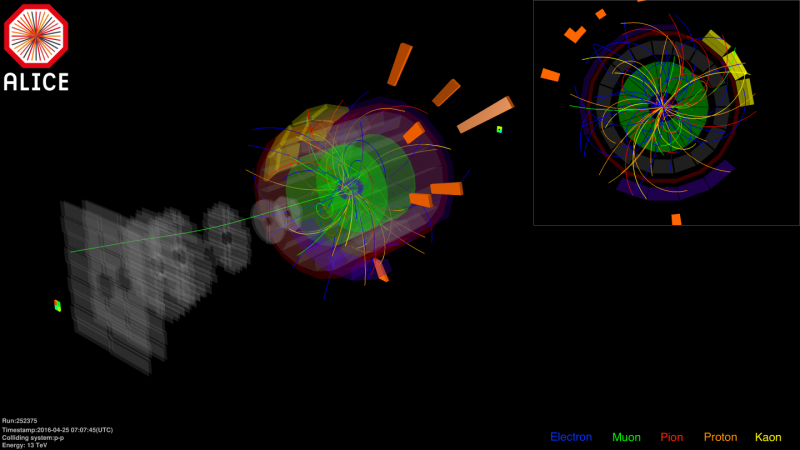

ALICE

In the early hours of 23 April, when the Large Hadron Collider (LHC) operator team declared the first stable beams for this year, and as soon as the luminosity reached the desired value at Interaction Point 2 (IP2), all participating detectors of ALICE joined in the data taking, harvesting the first proton-proton data at 13 TeV of Run 2 in 2016. After the first collisions with single pilot bunches, the bunch intensity as well as the number of bunches inside the LHC were increased in an unprecedented way. Trigger selections during this period allowed for a successful and vigorous data taking campaign, which has so far already collected a quarter of the planned minimum bias events as well as 15 million high multiplicity events.

After completing its extensive commissioning, which included the installation of 216 readout cards, the Time Projection Chamber (TPC) was ready to be included in the first runs. With the first data collected during stable beams, the ALICE team was able to test the performance of the new TPC readout cards as well as to check the data quality after the replacement of the TPC gas. These specific runs were carried out at higher than normal collision rates during the intensity ramp-up phase of the LHC, allowing for the maximum readout rates for the TPC, which will also be encountered during the p-Pb run later this year.

In order to collect more data for, among other things, the production of low-mass particles, the magnetic field of the solenoid was temporarily lowered from the nominal field of 0.5 T to 0.2 T. Almost 54 million minimum bias events were recorded under these conditions.

On 17 May the van der Meer scan for ALICE was performed successfully. Eight successive scans were performed over three and a half hours. During the van der Meer scans of the other three experiments, the special beam optics applicable to IP2 allowed for data taking, including with the Zero Degree Calorimeter (ZDC) detector, resulting in 15 million ultra-diffractive minimum bias events.

As the LHC is currently performing the last steps towards running at the maximum foreseen number of bunches, ALICE is ready and gearing up to complete its proton-proton data taking at 13 TeV. At the same time the team is eagerly looking forward to joining the p-Pb campaign, which will be carried out at 5 and 8 TeV from mid-November to mid-December of this year.

The author would like to thank: Siegfried Förtsch (ALICE), Alex Cerri (ATLAS), Greg Rakness (CMS), Patrick Robbe (LHCb)