The LHC pilot beam at LHCb in preparation for Run 3 start

During the second long shutdown of the LHC, the LHCb experiment is undergoing a full metamorphosis to be able to collect an unprecedented data sample starting from next year [1]. The upgraded LHCb has been designed to operate at five times the instantaneous luminosity of the previous decade of data taking, which requires the replacement of 80% of the sensitive detector channels. Moreover, to increase the flexibility and efficiency in the selection of events with hadronic final states, a brand new, fully software-based trigger running on GPUs has been conceived: the upgraded LHCb trigger will acquire full data at 40 MHz, the rate of LHC collisions. This ambitious plan needs a completely new readout system: 100% of the detector front-end channels have been replaced, and a new data centre [2] has been built to process the impressive data flow of 4 TB/s, filtering it down to 10 GB/s.
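To put the quoted rates in perspective, the short Python sketch below works out the reduction factor the new data centre has to deliver and the average amount of data per bunch crossing. It is a back-of-the-envelope illustration only: the function names are hypothetical, decimal units are assumed (1 TB = 10^12 bytes), and the event size assumes that the full 4 TB/s is spread evenly over all 40 MHz crossings.

```python
# Back-of-the-envelope check of the data rates quoted in the text.
# Assumptions (not from the article): decimal units, and the input
# bandwidth shared evenly across every bunch crossing.

BUNCH_CROSSING_RATE_HZ = 40e6      # 40 MHz readout rate (from the text)
INPUT_BANDWIDTH_B_PER_S = 4e12     # 4 TB/s into the data centre (from the text)
OUTPUT_BANDWIDTH_B_PER_S = 10e9    # 10 GB/s after filtering (from the text)


def reduction_factor(input_rate: float, output_rate: float) -> float:
    """Ratio between the incoming and the outgoing data rate."""
    return input_rate / output_rate


def mean_event_size_bytes(bandwidth: float, crossing_rate: float) -> float:
    """Average number of bytes read out per bunch crossing."""
    return bandwidth / crossing_rate


if __name__ == "__main__":
    factor = reduction_factor(INPUT_BANDWIDTH_B_PER_S, OUTPUT_BANDWIDTH_B_PER_S)
    size = mean_event_size_bytes(INPUT_BANDWIDTH_B_PER_S, BUNCH_CROSSING_RATE_HZ)
    print(f"Data reduction factor: {factor:.0f}")
    print(f"Average data per crossing: {size / 1e3:.0f} kB")
```

Under these assumptions the data centre has to reduce the data volume by a factor of 400, with roughly 100 kB read out per bunch crossing on average.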

The installation of the final elements of the new detector is ongoing, and the LHC pilot beam provided a great opportunity to test the subsystems that were already finalised and to validate the new infrastructure for taking and processing data. The sub-detectors taking part in the pilot beam data-taking were: the electromagnetic and hadronic calorimeters (ECAL and HCAL), the Muon detector (MUON), the downstream Ring Imaging Cherenkov detector (RICH2) and the first elements of the Probe for LUminosity MEasurement (PLUME), a new luminometer designed specifically for the LHCb Upgrade. Amongst the participants in the beam test, RICH2 and PLUME were the two subsystems where new active elements had been installed during the past months. All the participating sub-detectors were equipped with new front-end electronics, able to operate at 40 MHz. All the detectors had to be connected to the LHCb Upgrade Data AcQuisition system (DAQ) in the new data centre via 300 m long optical fibres. In the data centre, custom boards are in charge of collecting data from the various detector elements, and the outputs of all the detectors are merged by a new Event Builder. In addition, the LHCb Online Team provided a common infrastructure to integrate the configuration, control and data taking of each subsystem, commonly referred to as the Experiment Control System (ECS).

Moreover, the LHCb collaboration has developed a set of detectors to monitor the beam and machine-induced background conditions around its interaction point. For the safe operation of the experiment, it is essential to quickly detect and react to anomalous beam conditions around the interaction region. The protection of the detector against adverse beam conditions is ensured by the Beam Conditions Monitor (BCM) system. The BCM system has been completely refurbished in preparation for Run 3, and its upstream station was the first subsystem to observe activity from the LHC during the first injection of the beam, on 19 October. Furthermore, the operational test of the BCM was fully achieved by providing the beam interlock to the LHC during the collisions period. Further monitoring of the beam conditions is provided by the Radiation Monitoring System (RMS), installed upstream of the collision point. The newly refurbished RMS was able to detect LHC activity starting from the first injection on 19 October, observing the first LHC “splashes”.

In the weeks leading up to the LHC beam test, each sub-detector had to go through a common checklist as preparatory work in view of the collisions. This was a huge set of commissioning tasks, needed to bring the detector elements together with the new DAQ infrastructure and the ECS. Finally, on 27 October, the first stable beam conditions were provided: each sub-detector, operating in standalone mode, could start hunting for the beam activity! With a bit of healthy competition, the race was won by ECAL and HCAL (Figure 1).

Figure 1. Beam activity detected by ECAL and HCAL: two colliding bunches in LHCb were observed with the correct spacing in bunch-crossing identifier (BXID), and beam-gas activity can be observed at later BXIDs as well.

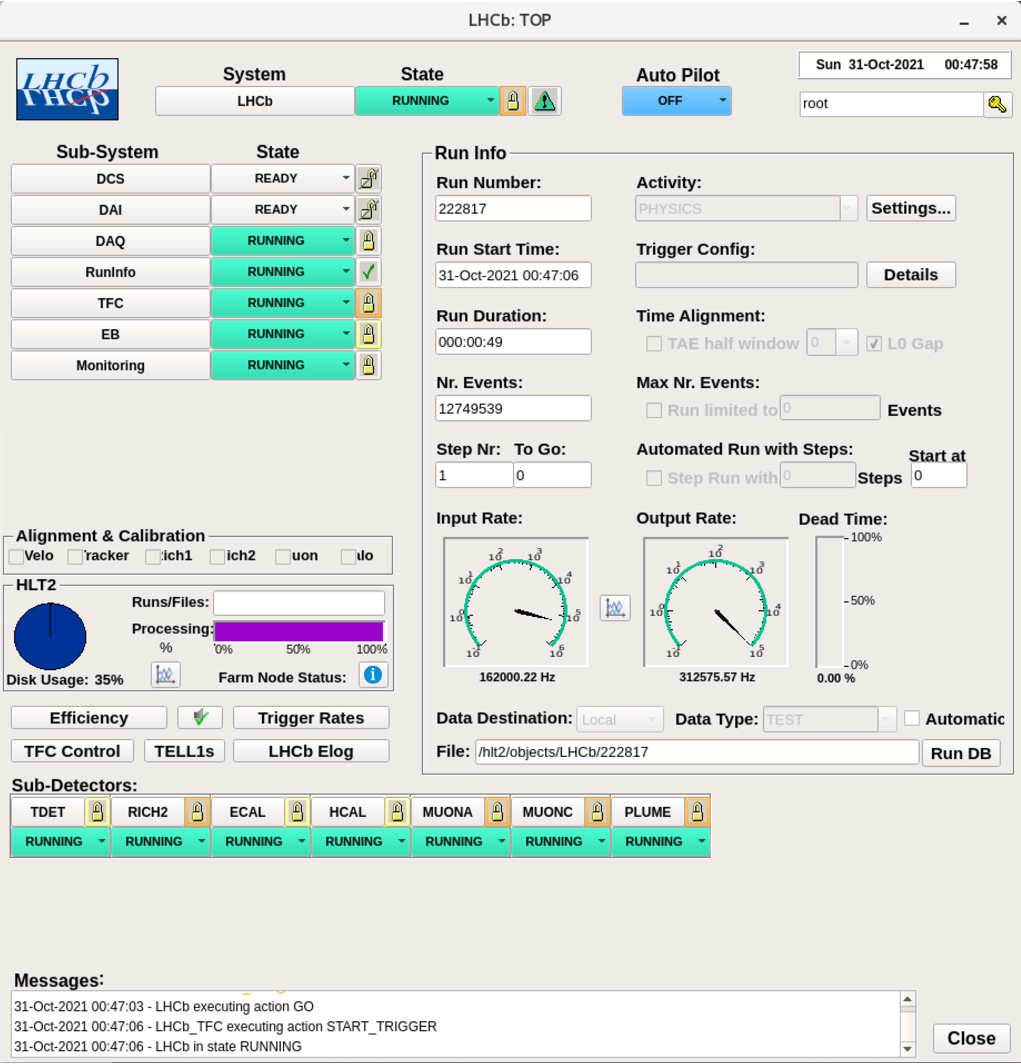

After this initial excitement, the beam activity was detected by every subsystem in the following fills. Step by step, each of the LHCb sub-detectors was aligned in time so that, instead of acquiring data independently, they could be operated centrally via the global LHCb ECS: the new LHCb Online system, comprising the central DAQ, the Event Builder and the ECS, was tested and validated (Figure 2).

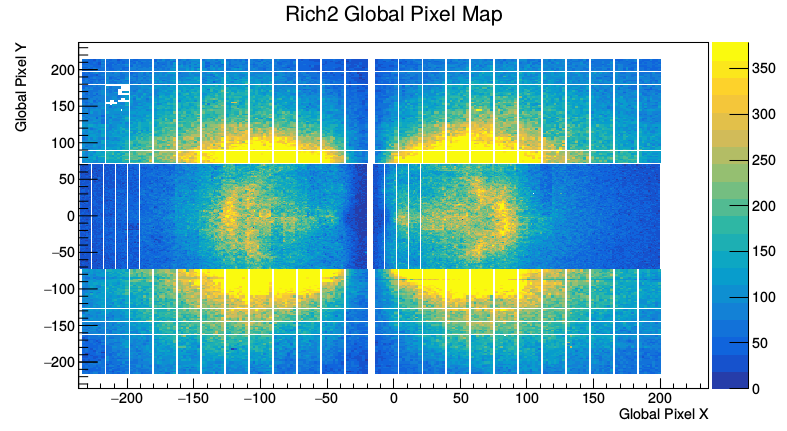

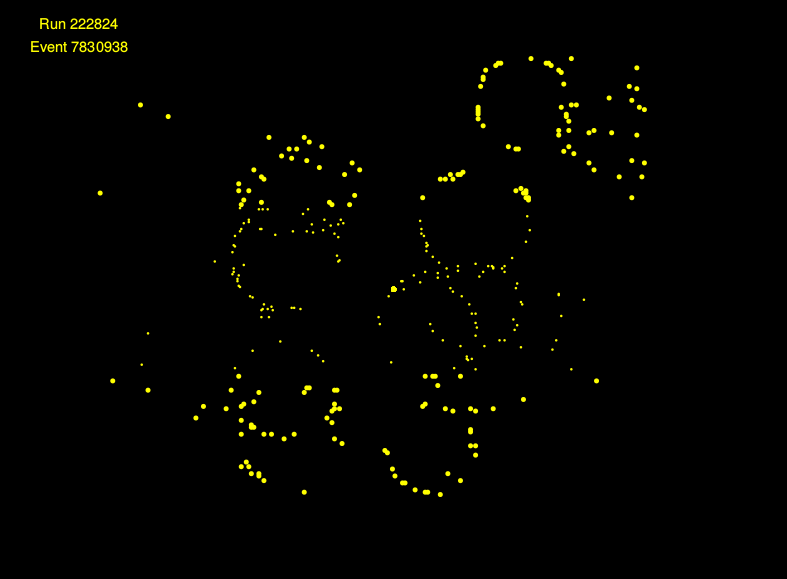

Following the first collisions, the LHCb programme focused on consolidating the overall stability of the readout system while fine-tuning the parameters of the individual subsystems (Figures 3 and 4). A dedicated trigger, running on a set of brand new GPUs and based on the signal amplitude detected by the ECAL, was developed to fully exploit the beam time and provide a first test of the new fully software-based dataflow.

Figure 2. A picture of the central LHCb ECS controlling all subsystems included in the LHC pilot beam test.

The four days of stable beams proved to be an excellent opportunity for the LHCb Upgrade to test the installed subsystems in realistic conditions. The new Online chain was validated and consolidated. A dedicated trigger line was developed to further test the dataflow.

Figure 3. Top: Cumulative hitmap of RICH2 acquired in stable beam conditions. Bottom: Single event display of the Cherenkov rings detected in RICH2. (Credits: LHCb Collaboration)

Figure 4. Hitmap detected across the four muon stations: from M2, the closest to the collision point, to M5, the most downstream.

The successful week has now enabled the launch of a new campaign of global commissioning with cosmic rays, to include newly completed detectors and validate the LHCb Upgrade in preparation for the start of Run 3. Even better, the period was excellent for building a real sense of community again: everybody involved had a lot of fun during the LHC pilot beam, and LHCb is looking forward to taking data again soon.

Further Reading

[1] LHCb’s momentous metamorphosis (CERN Courier, January 2019)

[2] ALICE and LHCb upgrade their data centres (CERN Home website, May 2019)